Is Facebook Really Building a Bulgarian Unit?

The short answer is: not exactly. As part of its European expansion and its strategy to prevent the spread of manipulative news and posts that violate its community standards, Facebook, the largest social network, is contracting an outsourcing company in Sofia. The local unit, operated by customer support company Telus, will be responsible for the control and moderation of reported posts and information, local publication Capital.bg reported. Up to 150 employees will be hired to monitor content in three languages: Turkish, Russian and Kazakh.

Worldwide, Facebook has 30K people working on issues related to safety and security, and half of them are focused on reviewing reported posts or content that violates the platform's community standards, be it false and misleading posts or hate speech. In 2018, Facebook opened a content control office in Barcelona through the same mechanism, with a local outsourcing partner. The company also has similar operations in Germany.

In addition to human-supported reviews, the platform applies artificial intelligence algorithms to detect violating behavior and take down content and pages.

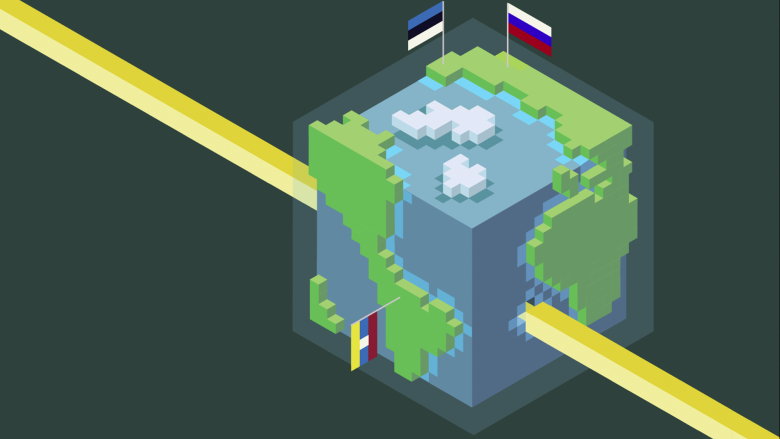

Facebook’s tactics include blocking and removing fake accounts, finding and removing bad actors, limiting the spread of false news and misinformation, and attempting to bring transparency to political advertising. That is what Facebook recently shared in a blog post about its preparations for the upcoming European Parliament elections and the Ukrainian presidential election in 2019.

The fact-checking efforts

The company is also expanding its third-party fact-checking program, which covers 16 languages and is usually done in collaboration with established fact-checking organizations like the UK's Full Fact. Facebook has also recently rolled out the ability for fact-checkers to review photos and videos in addition to article links because, according to the company, multimedia-based misinformation is making up a greater share of false news. When a fact-checker rates a post as false, it gets down-ranked in News Feed, which significantly reduces its distribution. This stops a post from spreading and reduces the number of people who see it, Facebook states. The post, however, is not deleted and can still be found.

Facebook will also be launching a new tool that aims to prevent foreign interference in the upcoming elections and make political advertising on Facebook more transparent. According to the company, advertisers will need to be authorized before purchasing political ads, and far more information about the ads themselves will be made available for people to see. The tool will be rolled out globally in June.